Can AI handle legal questions yet? As a continuation of the Insolvency Bot project, we compared the capabilities of older and newer large language models (LLMs) on English and Welsh insolvency law questions.

Thomas Wood asked several LLMs a series of questions about insolvency law, set by insolvency expert Eugenio Vaccari and pitched at roughly undergraduate level. We tested older LLMs such as GPT-3.5 as well as newer entrants such as DeepSeek.

We used the LLMs “off the shelf” with no modification (our control), and then, as a comparison, we also included relevant English and Welsh case law, statutes, and forms from HMRC in the prompt. For example, instead of asking an LLM

I have X debts and Y happened. Should I close my company?

we can ask the LLM,

The Insolvency Act 1986 Section 123 states that [paragraph]. The Companies Act 2006 Section 456 states that [paragraph]. This Supreme Court ruling is relevant: [ruling]. I have X debts and Y happened. Should I close my company?

In other words, we do the legwork of looking up the relevant information and stuffing it into the prompt, and the LLM just has to do what it’s good at, namely formulating sentences. This technique of retrieving relevant text and adding it to a prompt is called retrieval-augmented generation, or RAG. What’s cool about RAG is that the user doesn’t need to see it.
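The prompt-stuffing step described above can be sketched in a few lines of Python. This is a minimal illustration and not the Insolvency Bot's actual code: the `Source` class, the `retrieve` and `build_prompt` functions, the keyword sets, and the keyword-overlap ranking are all assumptions made for the example, and the `[paragraph]` placeholders stand in for the real legal text.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    citation: str        # e.g. "The Insolvency Act 1986 Section 123"
    text: str            # placeholder here; a real system stores the passage
    keywords: frozenset  # hypothetical index terms, invented for this sketch


def tokenize(text: str) -> set[str]:
    """Lower-case the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, corpus: list[Source], top_k: int = 2) -> list[Source]:
    """Rank sources by keyword overlap with the question (an assumed, naive method)."""
    question_tokens = tokenize(question)
    scored = [(len(question_tokens & s.keywords), s) for s in corpus]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Keep only the best top_k sources that share at least one keyword.
    return [s for score, s in ranked[:top_k] if score > 0]


def build_prompt(question: str, sources: list[Source]) -> str:
    """Prepend the retrieved passages to the user's question."""
    context = " ".join(f"{s.citation} states that {s.text}." for s in sources)
    return f"{context} {question}".strip()


# Toy corpus: the keyword sets are illustrative, not taken from the statutes.
corpus = [
    Source("The Insolvency Act 1986 Section 123", "[paragraph]",
           frozenset({"debts", "company"})),
    Source("The Companies Act 2006 Section 456", "[paragraph]",
           frozenset({"company", "close"})),
    Source("A hypothetical unrelated provision", "[paragraph]",
           frozenset({"patent"})),
]

question = "I have X debts and Y happened. Should I close my company?"
prompt = build_prompt(question, retrieve(question, corpus))
print(prompt)
```

The user only ever types `question`; the augmented `prompt` is what gets sent to the LLM, which is why the retrieval step can stay invisible to them.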

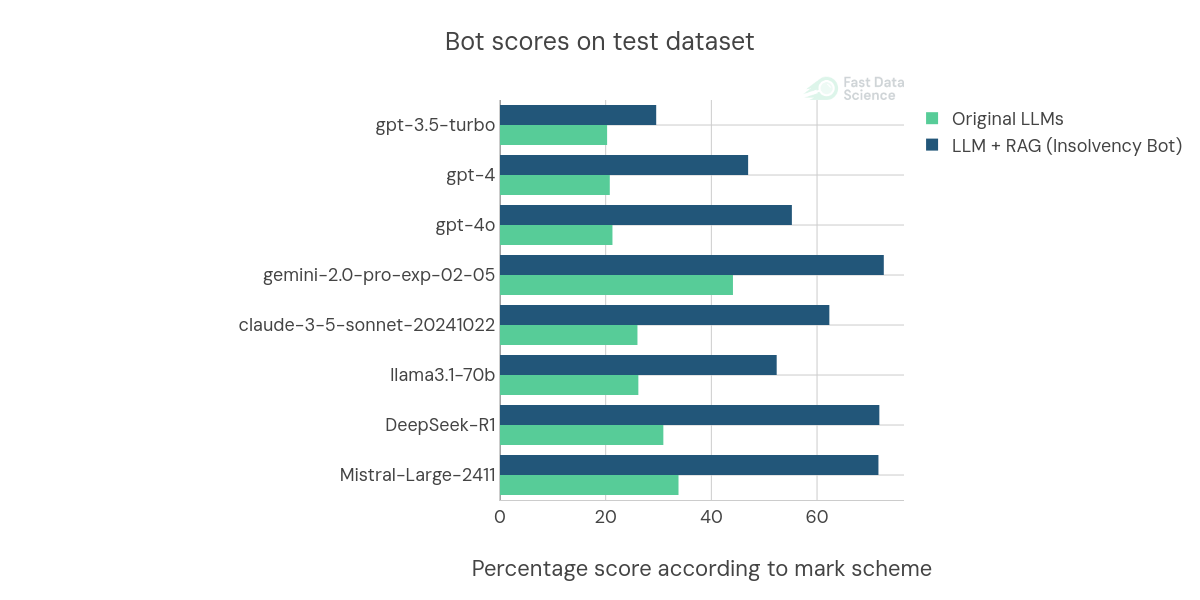

We found that over the last few years, the more advanced LLMs have benefited more from the extra information that we included in the prompt. LLMs are constantly improving in their unmodified form, but you can see clearly in this time series plot that RAG has also become more effective over time.

The models released in 2025, such as DeepSeek and the current iteration of Gemini, now outperform the earlier GPT-3.5 by a large margin. This was not surprising. What is unexpected is that the RAG-augmented models have even more of an edge over their non-RAG counterparts than they did one or two years ago.

So we built a RAG system long before Google Gemini or DeepSeek came out, and it has performed far better on those models than on any model we had access to back in 2023 when we developed the system. Any ideas why this could be? Contact us and let us know your thoughts!

The pace of improvements is also astounding. Could we be facing a new Moore’s law in AI?

You can read our original paper (which predates the release of DeepSeek) here:

And you can try the Insolvency Bot here: https://fastdatascience.com/insolvency

