Natural language processing (NLP) is revolutionising how businesses interact with information. But large language models (LLMs), also known as generative models or GenAI, can struggle with factual accuracy and with keeping up with real-time information.

If ChatGPT was trained on data until a certain year, how can it answer questions about events that happened after the cutoff point?

Retrieval-augmented generation (RAG) allows LLMs such as ChatGPT to stay up to date in their responses.

Natural language processing

Remember the old mobile phones that suggested the next word of a sentence based on the words you had already typed? That is essentially all LLMs are doing: predicting the next word from the words that came before it.

An LLM is a super-powered autocomplete. It excels at understanding language patterns but can lack domain-specific knowledge. LLMs are notorious for hallucinating when they don’t know the answer.
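The autocomplete analogy can be made concrete with a toy next-word predictor. The sketch below is a bigram model: it counts which word follows which in a tiny made-up corpus and always suggests the most frequent follower. Real LLMs use neural networks over sub-word tokens and much longer context, so treat this as an illustration of the idea rather than of how ChatGPT is built. All function names are hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def suggest_next(followers: dict[str, Counter], word: str) -> str | None:
    """Return the most frequent follower of `word`, or None if none was seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    # Ties are broken by the order in which the followers were first seen.
    return counts.most_common(1)[0][0]


def autocomplete(followers: dict[str, Counter], start: str, length: int = 5) -> str:
    """Greedily extend `start` one predicted word at a time."""
    words = [start.lower()]
    for _ in range(length):
        nxt = suggest_next(followers, words[-1])
        if nxt is None:  # the model has never seen anything follow this word
            break
        words.append(nxt)
    return " ".join(words)


# Hypothetical training text; a real model is trained on billions of words.
corpus = "the court granted the petition and the court adjourned the hearing"
model = train_bigrams(corpus)
print(autocomplete(model, "the", 3))  # prints: the court granted the
```

The model only knows what appeared in its training text. Ask it about a word it never saw and it has nothing to offer; a large neural model in the same position will still produce fluent text, which is where hallucinations come from.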

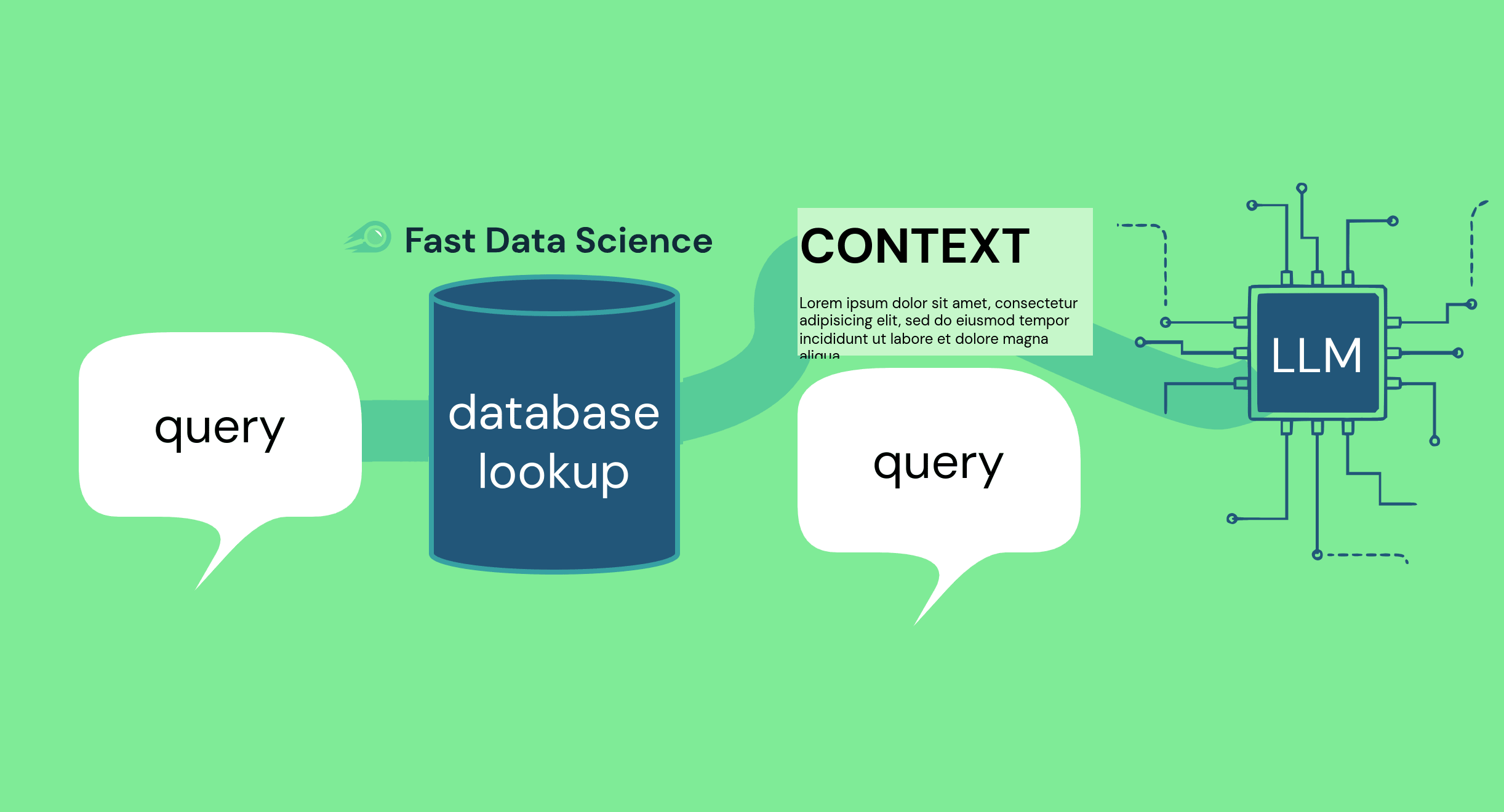

We can mitigate hallucinations and inaccuracies by taking the user's prompt, retrieving useful information that we think the LLM should know from an external knowledge base, and prepending or appending that information to the prompt before passing it to the LLM. For example, if the user has a query about English insolvency law, we can send the user's original question together with relevant information retrieved from a database.
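A minimal sketch of the augmentation step, assuming the relevant passages have already been retrieved. The function name `build_augmented_prompt` and the instruction wording are hypothetical, and the passages are placeholders; the only point it illustrates is that the retrieved text is combined with the user's original question before the whole prompt is sent to the LLM.

```python
from __future__ import annotations


def build_augmented_prompt(question: str, passages: list[str]) -> str:
    """Combine retrieved passages and the user's question into one prompt.

    The passages are placed before the question, so the LLM reads the
    supporting context first. The instruction wording is illustrative only.
    """
    # Number each passage so the model (or a human reviewer) can refer to it.
    context = "\n\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say that you don't know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


# Hypothetical example: the passages stand in for text retrieved from a database.
question = "What is a creditors' voluntary liquidation?"
passages = [
    "Placeholder for a relevant statutory provision retrieved from the database.",
    "Placeholder for a relevant extract from guidance or case law.",
]
print(build_augmented_prompt(question, passages))
```

Telling the model to admit when the context lacks the answer is one common way to discourage it from falling back on guesswork.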

Modifying the prompt in this way before it is sent to an LLM is a form of prompt engineering.

With RAG, we augment the request by retrieving relevant documents from the knowledge base and feeding them to the LLM along with the original prompt. This empowers the LLM to generate more accurate and up-to-date responses.
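The retrieve-then-augment loop can be sketched with nothing more than word-overlap scoring. This is a simplified stand-in and is not meant to describe how the Insolvency Bot is built: it ranks documents by cosine similarity of their word counts, whereas many production RAG systems use dense vector embeddings and a vector database instead. The knowledge-base entries and all function names are hypothetical.

```python
from __future__ import annotations

import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lower-case the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return up to k documents with the highest non-zero similarity to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score > 0]


def rag_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Build a prompt containing the retrieved documents followed by the query."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base; a real one might hold thousands of documents.
knowledge_base = [
    "Hypothetical note: how a winding-up petition is presented to the court.",
    "Hypothetical note: bankruptcy of individuals.",
    "Hypothetical note: office opening hours and car parking.",
]
print(rag_prompt("How does a winding-up petition work?", knowledge_base))
```

Only the first note shares words with the question, so it is the only document placed in the prompt; the irrelevant notes never reach the LLM.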

A demonstration of the Insolvency Bot, an application of retrieval-augmented generation (RAG) in the legal domain.

Here’s how RAG and prompt engineering can benefit businesses:

Real-world applications of retrieval-augmented generation

The Future of NLP

RAG represents a significant step forward in NLP. By combining LLMs with external knowledge, businesses can make information retrieval more efficient, more accurate, and more cost-effective. As the technology matures, RAG is poised to play a central role in the future of human-computer interaction.


This article is based on my presentation “The Role of Artificial Intelligence in Expert Investigations and the Preparation of Reports”, which I gave at the Expert Witness Conference on 20 May 2026.

Many companies and organisations have large datasets stored in unstructured formats. For example, you could work for a US-based healthcare provider or insurer with patient records stored as free text in HL7 files or PDFs. A building regulator, land registry, or mortgage provider may hold text and accompanying diagrams from thousands of building inspections or land title deeds. A patent attorney’s office may have records of patent applications in PDF format.

On 20 May, I attended the Expert Witness Conference in Dublin, Ireland, organised by La Touche Training. It was an eye-opening event, bringing together lawyers and expert witnesses from different fields, from Ireland and abroad. The event was chaired by Mr Justice Michael Peart, with a keynote address by the Honourable Mr Justice David Barniville, President of the High Court of Ireland.
