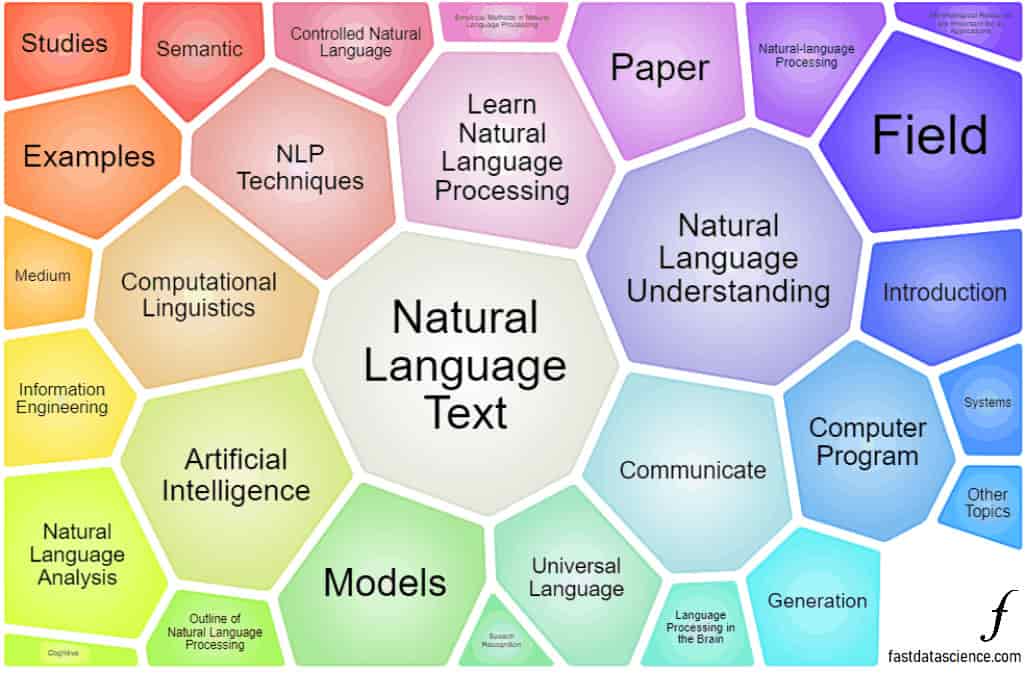

Our main area of focus is natural language processing (NLP). The manager, Thomas Wood, studied for a Masters in Computer Speech, Text and Internet Technology at Cambridge University in 2008, and since then he has worked exclusively in machine learning, mostly in NLP. In 2018, he founded Fast Data Science to deliver data science consultancy, focusing on NLP.

We have built NLP pipelines from scratch, and worked on natural language dialogue systems, document classifiers and text-based recommender systems. For these tasks we have used both traditional machine learning techniques and state-of-the-art approaches such as neural networks. We normally use Python for our NLP work.

Example applications of natural language processing include:

Below you can see a 3D representation of some technical terms used in a dataset of clinical trial documents.

Words with similar meanings and usages are close together. Words are colour-coded into clusters which correspond to groups such as diseases (cluster 3), verbs (clusters 1, 6 and 8), etc. If you move the mouse over a word, you can see that word’s cluster number, and the word’s nearest neighbours. A word’s nearest neighbours tend to be words with similar meaning or function, such as synonyms.
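The nearest-neighbour lookup described above can be sketched in a few lines of Python. This is only an illustration: the three-dimensional vectors below are made up, and cosine similarity is an assumed choice of similarity measure, since the visualisation's actual metric is not stated here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_neighbours(word, vectors, k=2):
    """Return the k words whose vectors are most similar to `word`'s vector."""
    target = vectors[word]
    scored = [
        (other, cosine_similarity(target, vec))
        for other, vec in vectors.items()
        if other != word
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [other for other, _ in scored[:k]]


# Hypothetical toy vectors, invented for illustration only.
toy_vectors = {
    "tumour": [0.9, 0.1, 0.0],
    "carcinoma": [0.8, 0.2, 0.1],
    "lesion": [0.7, 0.3, 0.0],
    "administer": [0.1, 0.9, 0.2],
    "randomise": [0.0, 0.8, 0.3],
}

print(nearest_neighbours("tumour", toy_vectors))
```

With these toy values, the two disease-like terms come out as the neighbours of "tumour", while the verbs rank lower.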

This is a demonstration of how natural language processing can be used to find synonyms and common topics in a completely new set of text documents, in a fully unsupervised fashion.

The word vectors were calculated in 128 dimensions using word embeddings on Google Cloud Platform and reduced to three dimensions using t-SNE. The words were assigned to 15 clusters using the k-Means clustering algorithm.
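As a rough illustration of the clustering step only, here is a minimal pure-Python sketch of k-means (Lloyd's algorithm) on toy two-dimensional points. It is not the code used for the visualisation: the data, the function names and the parameters `iterations` and `seed` are assumptions for the example, and the embedding and t-SNE steps are not shown. In practice a library implementation would normally be used instead.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def k_means(points, k, iterations=20, seed=0):
    """Assign each point to one of k clusters; returns a list of cluster labels."""
    rng = random.Random(seed)
    # Start from k distinct points chosen at random as the initial centroids.
    centroids = [list(p) for p in rng.sample(points, k)]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels


# Hypothetical toy data: two well-separated groups of points.
toy_points = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
toy_labels = k_means(toy_points, k=2)
print(toy_labels)
```

The same loop applies unchanged to 128-dimensional word vectors and 15 clusters, although the cluster numbers themselves are arbitrary and depend on the random starting centroids.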

Fast Data Science - London

Today many companies, particularly in industries such as healthcare, pharmaceuticals, legal, and insurance, have large amounts of unstructured data. This is typically data in text format, which may even be scanned documents, PDFs, HTML, or any other file type.

Unstructured data is very difficult to deal with but can contain a goldmine of information. Fast Data Science specialises in extracting value from organisations’ unstructured datasets.

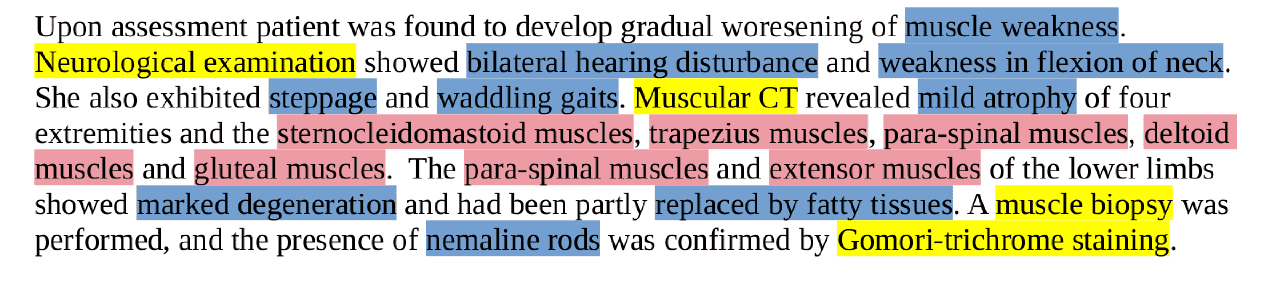

AI and natural language processing are being increasingly adopted across the healthcare sector. This technology is sometimes called healthtech or MedTech. NLP is being used to compare and detect changes in clinical reports, extract clinical concepts such as MeSH terms from electronic medical records, and develop human-to-machine natural language dialogue systems to improve the healthcare experience.

We have worked on a number of projects in healthcare, including:

We do a lot of natural language processing with Python. We have worked on a variety of NLP models, including:

Topic detection is an NLP technique that allows you to discover common themes in a set of unstructured documents.

We work with whichever frameworks and languages meet the client’s requirements, for example

NLP projects we have worked on for major household names, multinationals and startups include

Contact us to hire an NLP data scientist today!

We have tested and evaluated 16 Large Language Models here: https://fastdatascience.com/generative-ai/openai-vs-claude-vs-qwen/

There is not much difference between the most recent state-of-the-art large language models. There is a much greater difference in capability between models that were released six months apart than there is between models from different vendors. We found that Chinese models such as DeepSeek and Qwen performed on a par with US models like ChatGPT and Gemini.

In general, the off-the-shelf models from the big tech companies also outperform any fine-tuned custom model that you or we (outside big tech companies) have the capability of building. The resources that have gone into training an LLM from scratch are comparable to the GDP of a small country. So even if it appears worthwhile to fine-tune a model for mental health, medicine, or finance, it usually isn’t.

Our advice when choosing a large language model provider for your application is to pick whichever one is most convenient for you to integrate into your technology stack, while avoiding vendor lock-in. Try to ensure that you will always be able to switch easily to a different provider in future, because an API may become deprecated, a company may stop offering a particular model, or prices may increase. Maximum flexibility is key.
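One way to keep that flexibility is to hide every vendor behind the same small interface, so that application code never calls a vendor SDK directly. The sketch below is a hypothetical illustration: `LLMClient`, `vendor_a` and `vendor_b` are invented names, and the two provider functions are stand-ins for real API calls.

```python
from typing import Callable, Dict, List

# A provider is any function that turns a prompt into a completion.
Provider = Callable[[str], str]


class LLMClient:
    """Routes prompts to named providers, trying them in order of preference."""

    def __init__(self, providers: Dict[str, Provider], preference: List[str]) -> None:
        unknown = [name for name in preference if name not in providers]
        if unknown:
            raise ValueError(f"Unknown providers: {unknown}")
        self._providers = providers
        self._preference = list(preference)

    def complete(self, prompt: str) -> str:
        errors = []
        for name in self._preference:
            try:
                return self._providers[name](prompt)
            except Exception as exc:  # fall back to the next provider
                errors.append(f"{name}: {exc}")
        raise RuntimeError("All providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real vendor calls.
def vendor_a(prompt: str) -> str:
    raise ConnectionError("model retired")  # simulates a deprecated API


def vendor_b(prompt: str) -> str:
    return f"[vendor_b] {prompt}"


client = LLMClient(
    {"vendor_a": vendor_a, "vendor_b": vendor_b},
    preference=["vendor_a", "vendor_b"],
)
print(client.complete("Summarise this trial protocol"))
```

Switching vendors then means changing the `preference` list or registering a new provider function, rather than rewriting the application.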

Fast Data Science is an NLP company.

What we can do for you